Why Traceability Matters in AI-Enabled Systems

Category: Risk & Governance
Author: Adam Clark Miller
Publish date: 2026-04-07

Traceability is the record of how work moved through the system

If a system helps classify, validate, recommend, or route meaningful work, teams need to know what happened, why it happened, and how it can be reviewed.

That is traceability in practice.

It is also one of the fastest ways to distinguish a serious operational system from a shallow AI add-on. In low-stakes environments, a lack of traceability may be an inconvenience. In higher-consequence workflows, it becomes a structural weakness. When decisions affect money, eligibility, approvals, compliance posture, service quality, or downstream operations, the absence of a clear record makes it harder to trust the system, harder to correct it, and harder to govern it responsibly.

Traceability should not be treated as a late-stage add-on

Traceability is sometimes described as an enterprise requirement, which can make it sound like a late-stage add-on. In reality, it is much more basic than that: it is the system’s ability to preserve the path of work in a form people can understand. In practice, that means being able to answer questions like these:

- What information came in?

- What did the system identify or infer?

- What action was taken next?

- Was the result accepted automatically or escalated?

- Did a person override it?

- On what basis?

- What changed in the record afterward?

Without answers to those questions, a workflow may still function for a time, but it becomes progressively harder to manage.
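One way to keep those questions answerable is to record each step of a case as a structured event tied to that case. The sketch below is a minimal illustration under stated assumptions, not a prescribed design: `TraceEvent`, `TraceLog`, the step names, and the sample values are all hypothetical, and the in-memory list stands in for whatever durable store a real system would use.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class TraceEvent:
    """One step in a case's path. Field names are illustrative, not a standard."""
    case_id: str
    step: str          # e.g. "input_received", "classified", "escalated", "override"
    actor: str         # "system" or a reviewer identifier (hypothetical format)
    detail: dict       # what was identified, decided, or changed at this step
    reason: str = ""   # basis for the action; in practice required for human overrides
    at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


class TraceLog:
    """Hypothetical in-memory, append-only event store."""

    def __init__(self) -> None:
        self._events: list[TraceEvent] = []

    def record(self, event: TraceEvent) -> None:
        # Events are only ever appended, never edited or removed.
        self._events.append(event)

    def history(self, case_id: str) -> list[TraceEvent]:
        # Appends happen in order, so filtering preserves each case's sequence.
        return [e for e in self._events if e.case_id == case_id]


log = TraceLog()
log.record(TraceEvent("C-1", "input_received", "system", {"source": "email"}))
log.record(TraceEvent("C-1", "classified", "system", {"type": "invoice", "confidence": 0.62}))
log.record(TraceEvent("C-1", "escalated", "system", {"queue": "manual_review"},
                      "confidence below illustrative 0.80 threshold"))
log.record(TraceEvent("C-1", "override", "reviewer:jlee", {"type": "credit_note"},
                      "document header says credit note"))

for e in log.history("C-1"):
    print(e.step, e.actor, e.detail, e.reason)
```

Read in order, the history answers what came in, what the system inferred, whether the case was escalated, who overrode it, and on what basis.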

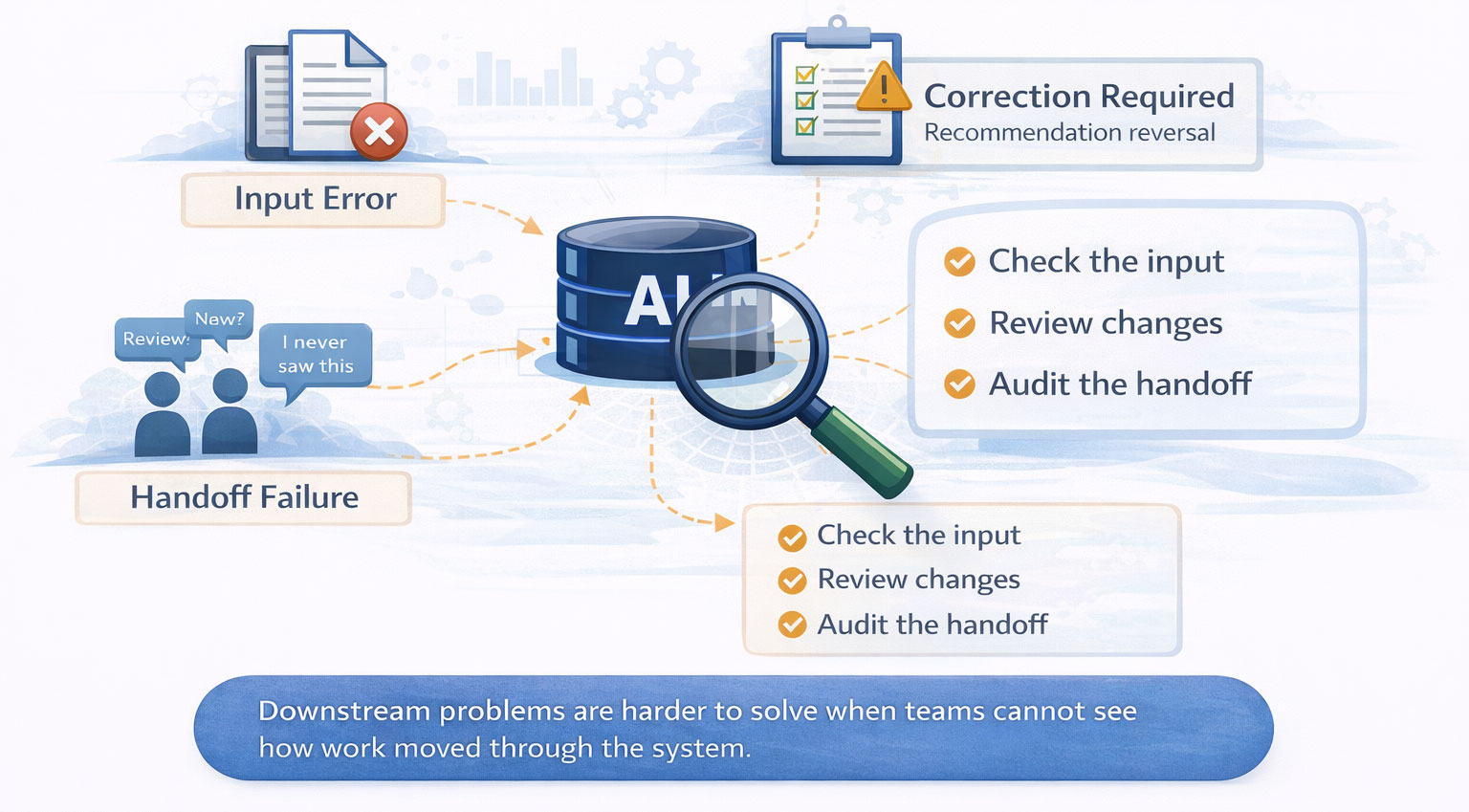

Operational debugging depends on traceability

This matters first at the operational level. When teams cannot see how work moved through the system, ordinary problem-solving becomes harder than it should be. A downstream issue appears, but no one can tell whether the root cause was a bad input, a misclassification, an incorrect rule application, a human override, or a handoff failure.

That is one reason traceability is not only about compliance. It is also about operational debugging.

- If a case was routed incorrectly, you need to know why.

- If a recommendation was accepted and later reversed, you need to know who intervened and under what reasoning.

- If exceptions are growing in one category, you need to see the pattern.

- If users are repeatedly correcting the same type of extracted field, that may indicate a workflow design issue, a source-quality problem, or a model weakness.

Traceability is what makes those patterns visible.
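To illustrate the last point in that list, here is a minimal sketch that computes a per-field correction rate from review data and flags fields that reviewers fix unusually often. It assumes corrections are already captured as `(field_name, was_corrected)` pairs, a hypothetical shape; the function name, the 20% threshold, and the minimum sample size are arbitrary placeholders.

```python
from collections import Counter


def frequently_corrected_fields(reviews, threshold=0.2, min_reviews=5):
    """Return {field: correction_rate} for fields corrected at or above `threshold`.

    `reviews` is an iterable of (field_name, was_corrected) pairs, one per
    reviewed extracted field. Fields with fewer than `min_reviews` reviews are
    skipped so that a handful of cases cannot trigger a flag on their own.
    """
    seen = Counter()
    corrected = Counter()
    for field_name, was_corrected in reviews:
        seen[field_name] += 1
        if was_corrected:
            corrected[field_name] += 1

    flagged = {}
    for field_name, total in seen.items():
        if total < min_reviews:
            continue
        rate = corrected[field_name] / total
        if rate >= threshold:
            flagged[field_name] = round(rate, 2)
    return flagged


# Illustrative data: invoice_date corrected in 4 of 10 reviews.
reviews = (
    [("invoice_date", True)] * 4 + [("invoice_date", False)] * 6
    + [("vendor_name", False)] * 10
    + [("po_number", True)] * 2
)
print(frequently_corrected_fields(reviews))  # {'invoice_date': 0.4}
```

A flag like this does not say whether the cause is workflow design, source quality, or the model; it only shows where to look, which is only possible if corrections were recorded in the first place.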

Traceability supports calibrated trust

It also plays a central role in trust. Teams are much more likely to rely on a system when they can inspect its behavior. This does not mean every user needs to understand every technical detail of a model. It means the workflow itself should be legible enough that staff can see what happened and respond intelligently.

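A traceable system supports calibrated trust: reliance that matches how well the system actually performs, rather than blind acceptance or blanket suspicion. It gives the organization a record to check that reliance against.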

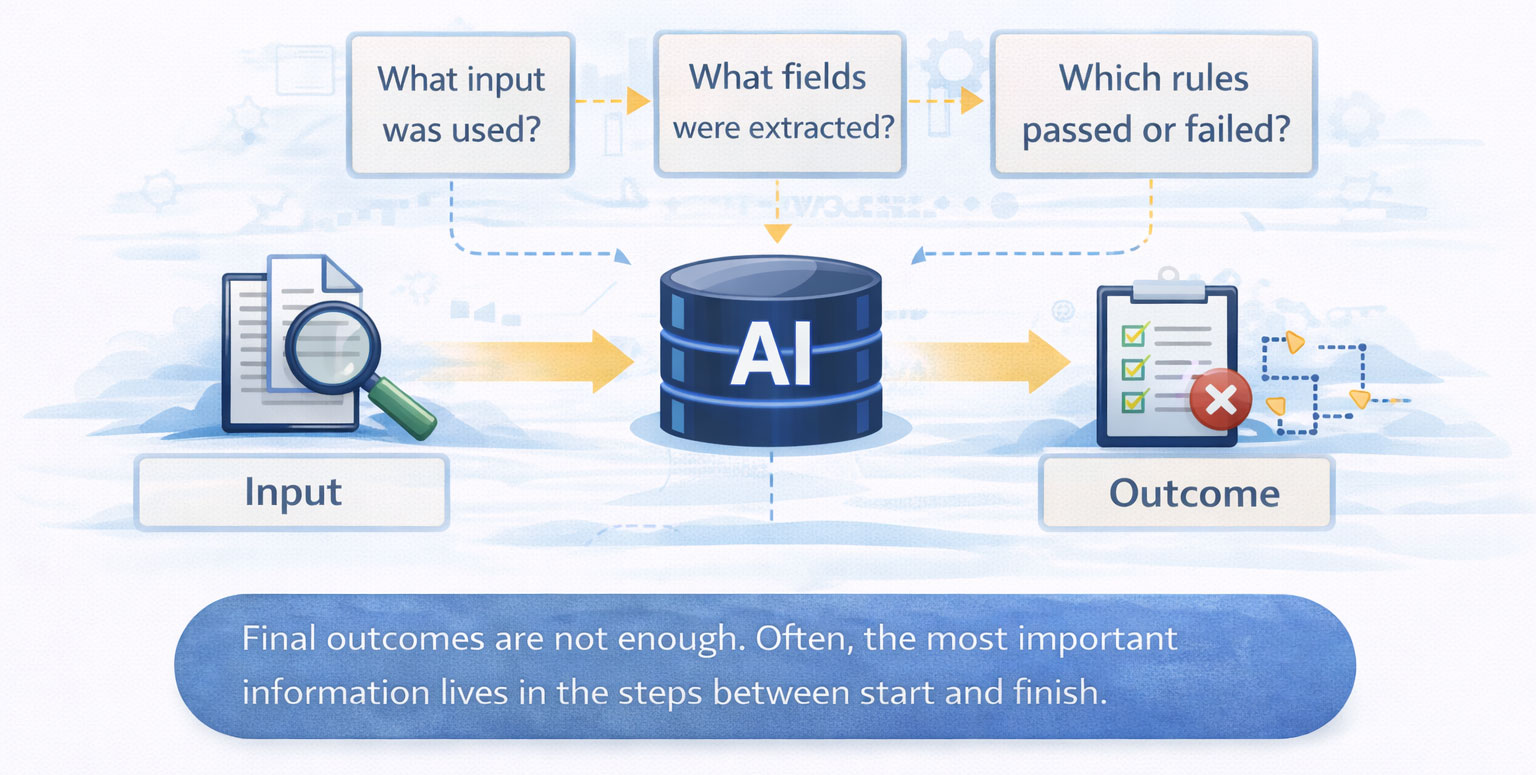

The most important trace data often lives in the middle of the workflow

That record usually needs to include more than final outcomes. In many AI-enabled workflows, the most important information lives in the middle of the process.

- What input was used?

- What document was classified as what type?

- What fields were extracted?

- What confidence or uncertainty indicators were present?

- Which business rules passed or failed?

- Was the case escalated?

- Who reviewed it?

- What was changed during review?

- What state transition happened next?

These are not technical luxuries. They are part of the control structure.
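A per-case record that keeps those intermediate results alongside the final outcome might look like the sketch below. It is a minimal illustration with hypothetical field names that mirror the questions above, and the sample values are invented; it serializes to JSON so the record can be stored and reviewed outside the running system.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class CaseTrace:
    """Intermediate results for one case; field names are illustrative."""
    case_id: str
    input_ref: str                                       # pointer to the input that was used
    document_type: str                                   # how the document was classified
    extracted_fields: dict = field(default_factory=dict)
    confidence: dict = field(default_factory=dict)       # per-field confidence scores
    rule_results: dict = field(default_factory=dict)     # rule name -> passed?
    escalated: bool = False
    reviewer: Optional[str] = None
    review_changes: dict = field(default_factory=dict)   # field -> {"from": ..., "to": ...}
    next_state: str = ""

    def failed_rules(self) -> list[str]:
        return [name for name, passed in self.rule_results.items() if not passed]

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)


trace = CaseTrace(
    case_id="C-42",
    input_ref="uploads/C-42.pdf",
    document_type="invoice",
    extracted_fields={"total": "1250.00", "due_date": "2026-05-01"},
    confidence={"total": 0.97, "due_date": 0.58},
    rule_results={"total_matches_po": True, "due_date_after_invoice_date": False},
    escalated=True,
    reviewer="reviewer:mpatel",
    review_changes={"due_date": {"from": "2026-05-01", "to": "2026-06-01"}},
    next_state="approved",
)
print(trace.failed_rules())  # ['due_date_after_invoice_date']
print(trace.to_json())
```

Storing review changes as from/to pairs, rather than only the corrected values, preserves what the system originally produced next to what the reviewer decided.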

Poorly logged overrides weaken the system over time

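The same is true of overrides. Human review is often treated as the safe fallback, but unless each override is logged with who made it, what it changed, and why, the system becomes harder to interpret over time: no one can tell whether reviewers are correcting real errors, compensating for a recurring weakness, or applying inconsistent judgment.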
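One way to enforce that is to refuse to record an override that lacks a reviewer or a stated reason, and to keep both the system's original value and the reviewer's replacement. This is a minimal sketch under stated assumptions: `Override` and `record_override` are hypothetical names, and a plain list stands in for durable storage.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Override:
    case_id: str
    field_name: str
    system_value: object   # what the system produced
    human_value: object    # what the reviewer replaced it with
    reviewer: str
    reason: str
    at: datetime


def record_override(log, case_id, field_name, system_value, human_value, reviewer, reason):
    """Append an override to `log`, rejecting entries that would be hard to interpret later."""
    if not reviewer.strip():
        raise ValueError("override must identify the reviewer")
    if not reason.strip():
        raise ValueError("override must state a reason")
    entry = Override(case_id, field_name, system_value, human_value,
                     reviewer, reason, datetime.now(timezone.utc))
    log.append(entry)
    return entry


overrides = []
record_override(overrides, "C-7", "category", "refund", "chargeback",
                "reviewer:akim", "card network notice attached")
try:
    record_override(overrides, "C-8", "category", "refund", "exchange", "reviewer:akim", "")
except ValueError as err:
    print("rejected:", err)  # rejected: override must state a reason
```

Keeping the system's original value matters: if an override simply overwrites the field, the record can no longer show how often, or where, people disagree with the system.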

Traceability is a governance requirement in real-world operations

This is where traceability becomes a governance requirement. Any organization operating in a regulated, audited, or high-accountability environment eventually needs to answer questions about how work was handled. If AI is involved anywhere in that chain, the demand for clear review history and decision context only increases.

A system does not become more defensible because it uses AI. It becomes more defensible when it can show how AI was used, where humans intervened, and how the workflow was controlled.

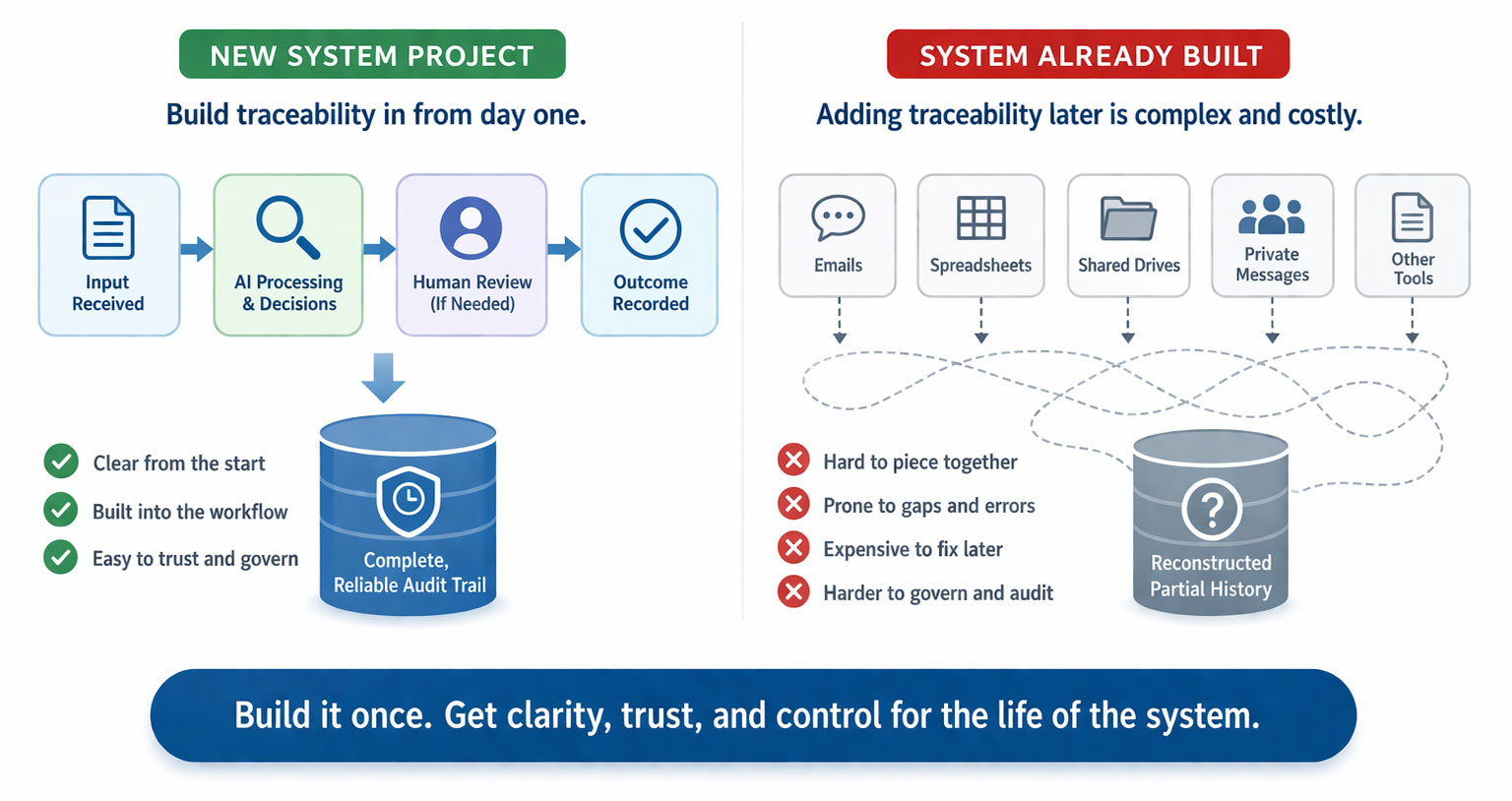

Traceability is easiest to build at the beginning

That is why traceability should be designed from the start rather than layered on afterward. Once a system has been built without meaningful event history, state visibility, and review capture, retrofitting those capabilities can be far more difficult than teams expect, and the history of decisions already made is often impossible to recover.
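Building this in early can be as simple as routing every state change through one function that checks the transition is allowed and records who made it and why. A minimal sketch, assuming a hypothetical set of workflow states, allowed transitions, and a `Case` class invented for illustration:

```python
from datetime import datetime, timezone

# Hypothetical workflow states and the transitions allowed between them.
ALLOWED = {
    "received": {"classified"},
    "classified": {"auto_accepted", "escalated"},
    "escalated": {"approved", "rejected"},
}


class Case:
    def __init__(self, case_id: str) -> None:
        self.case_id = case_id
        self.state = "received"
        self.history: list[dict] = []  # every transition, in order

    def transition(self, new_state: str, actor: str, note: str = "") -> None:
        """Move to `new_state` only if allowed, recording who moved it and why."""
        if new_state not in ALLOWED.get(self.state, set()):
            raise ValueError(f"{self.state} -> {new_state} is not an allowed transition")
        self.history.append({
            "from": self.state,
            "to": new_state,
            "actor": actor,
            "note": note,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        self.state = new_state


case = Case("C-3")
case.transition("classified", "system")
case.transition("escalated", "system", "rule check failed: amount_over_limit")
case.transition("approved", "reviewer:dnguyen", "limit exception on file")
print([(h["from"], h["to"], h["actor"]) for h in case.history])
```

Because state changes only through `transition`, the history cannot drift out of sync with the current state. Retrofitting the same guarantee is much harder once code throughout a system updates state directly.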

Leaders should ask how quickly the system can be understood when something goes wrong

For leadership teams, this is one of the clearest questions to ask when evaluating AI-enabled operations: if something goes wrong, how quickly can we understand what happened?

If the answer is vague, traceability is weak.

If the answer depends on reconstructing events across multiple tools and private messages, traceability is weaker still.

Legibility is what makes AI-enabled workflows governable over time

If a workflow is important enough to automate or augment with AI, it is important enough to make legible. Traceability is how that legibility is preserved over time.

If your organization is facing real operational complexity and needs clarity before building, the next step is a Systems Discovery conversation.

All serious engagements with SongSwift begin there.
