Designing Human Escalation Paths for AI Systems

Category: Operational AI | Author: Adam Clark Miller | Publish date: 2026-04-03

The real design question is not whether humans remain involved in AI workflows. It is where they intervene, under what conditions, and with what authority.

Human involvement is not a design strategy by itself

A great deal of discussion about AI still treats “human in the loop” as a reassuring phrase rather than as a system design requirement. Used that way, it sounds like a broad principle: keep a person involved somewhere, and the workflow becomes safer. In practice, that is too vague to be useful.

A human escalation path only works when it is designed with precision.

If the system does not know when to stop, what to surface, who owns the next decision, and how the outcome should be recorded, then the human presence is not really part of the architecture. It is an informal fallback. Informal fallbacks are one of the main reasons operations become slow, opaque, and difficult to trust.

AI often exposes uncertainty rather than removing it

This matters because AI systems are often strongest in the middle ground between certainty and ambiguity. They can classify likely document types, extract fields, detect patterns, draft outputs, recommend actions, and identify anomalies. But they do not eliminate uncertainty. In many workflows, they reveal it more efficiently.

That is a good thing, provided the workflow has somewhere for uncertainty to go.

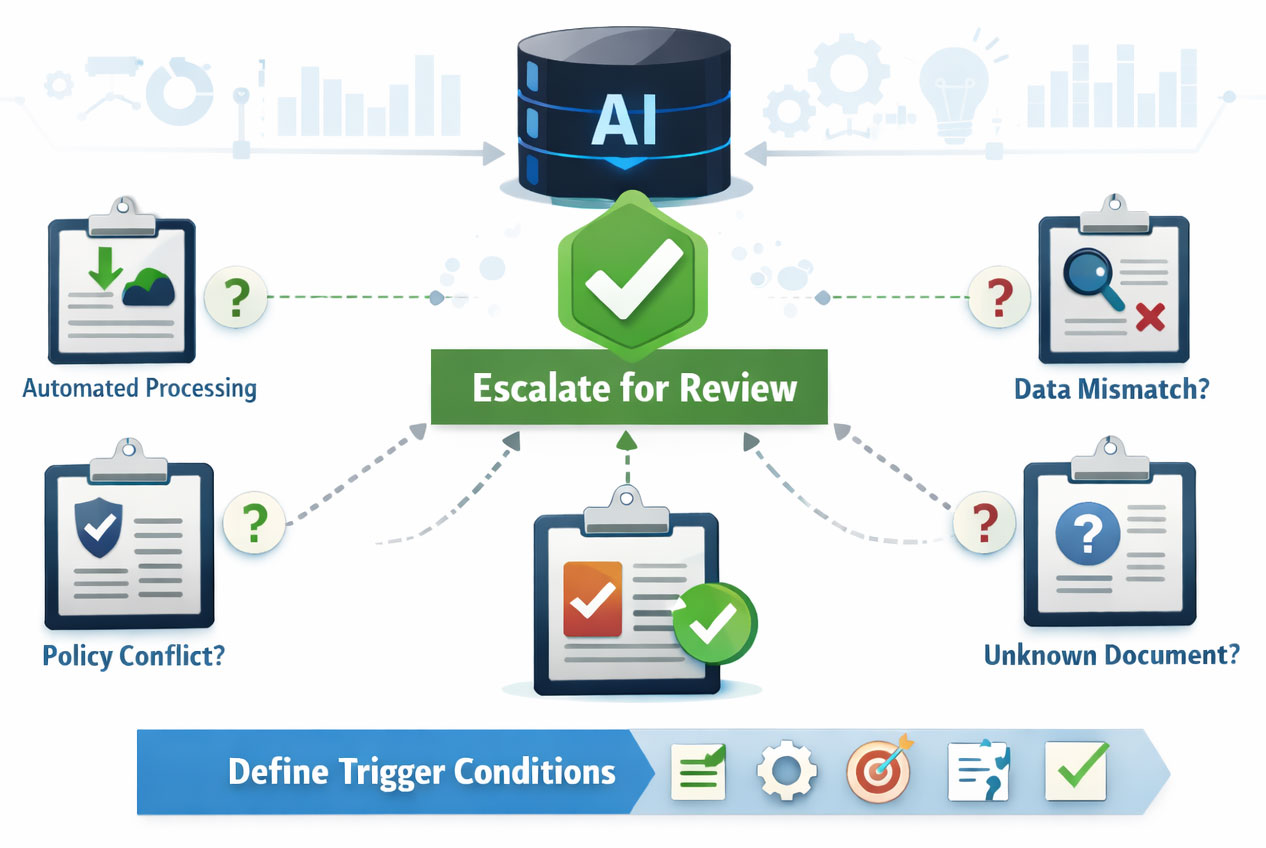

Escalation starts with explicit thresholds

An escalation path begins with thresholds.

Some cases are straightforward enough to proceed automatically. Others are not. The system may encounter incomplete inputs, conflicting values, unusual combinations, policy-sensitive content, or low-confidence interpretations. At that point, the question is not whether the AI failed. The question is whether the system has a disciplined way to respond.

This is where many implementations become fragile. Teams deploy AI into a workflow without deciding what kind of uncertainty matters, how it will be surfaced, and what action should follow. Users are then left to interpret odd behavior manually, often inconsistently. One reviewer may pass a case through. Another may escalate. A third may rework the data silently.

Intervention points should be defined in advance

A better approach defines intervention points in advance.

What conditions should trigger review?

- Low model confidence?

- Missing required information?

- Mismatch across sources?

- Detected policy conflict?

- Unrecognized document type?

These conditions should not be left to instinct. They should be represented intentionally inside the system.
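One way to represent the conditions listed above inside the system is as named, testable checks. The following is a minimal sketch, not a reference implementation: the `Case` shape, the 0.85 confidence floor, the required field names, and the set of known document types are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List, Optional


class Trigger(Enum):
    LOW_CONFIDENCE = "low_confidence"
    MISSING_FIELD = "missing_required_field"
    SOURCE_MISMATCH = "source_mismatch"
    POLICY_CONFLICT = "policy_conflict"
    UNKNOWN_DOC_TYPE = "unrecognized_document_type"


@dataclass
class Case:
    """Hypothetical shape of one item produced by the AI step."""
    doc_type: Optional[str]
    confidence: float
    fields: Dict[str, Optional[str]]
    # Same field as reported by different sources, e.g. {"amount": ["10.00", "12.00"]}
    source_values: Dict[str, List[str]] = field(default_factory=dict)
    policy_flags: List[str] = field(default_factory=list)


# Assumed thresholds and rules; a real workflow would set these deliberately.
CONFIDENCE_FLOOR = 0.85
REQUIRED_FIELDS = ("invoice_number", "amount")
KNOWN_DOC_TYPES = {"invoice", "receipt"}


def evaluate_triggers(case: Case) -> List[Trigger]:
    """Return every review trigger that applies; an empty list means proceed."""
    triggers = []
    if case.confidence < CONFIDENCE_FLOOR:
        triggers.append(Trigger.LOW_CONFIDENCE)
    if any(not case.fields.get(name) for name in REQUIRED_FIELDS):
        triggers.append(Trigger.MISSING_FIELD)
    if any(len(set(values)) > 1 for values in case.source_values.values()):
        triggers.append(Trigger.SOURCE_MISMATCH)
    if case.policy_flags:
        triggers.append(Trigger.POLICY_CONFLICT)
    if case.doc_type not in KNOWN_DOC_TYPES:
        triggers.append(Trigger.UNKNOWN_DOC_TYPE)
    return triggers
```

Returning all matching triggers, rather than stopping at the first, keeps the full reason for escalation available to whoever reviews the case.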

Escalation only works when ownership is clear

Once a review is triggered, role ownership becomes critical. Not every human reviewer should handle every kind of exception. Some issues may belong to an intake specialist. Others may require compliance review, manager approval, legal input, or a domain expert. If the escalation path does not reflect real decision ownership, the system will simply move uncertainty around rather than resolve it.
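Ownership can be made explicit with a routing table that maps each kind of exception to the role that decides it. The sketch below is illustrative only: the trigger names, the role names, and the precedence order are assumptions, and a real mapping would come from how decisions are actually owned in the organization.

```python
from typing import List

# Hypothetical mapping from exception type to the role that owns the decision.
OWNER_BY_TRIGGER = {
    "missing_required_field": "intake_specialist",
    "unrecognized_document_type": "intake_specialist",
    "low_confidence": "domain_expert",
    "source_mismatch": "domain_expert",
    "policy_conflict": "compliance_review",
}

# When a case has several triggers, the earliest role in this list takes it.
ROLE_PRECEDENCE = ["compliance_review", "domain_expert", "intake_specialist"]

# Exceptions nobody has claimed still get a named owner instead of no owner.
FALLBACK_OWNER = "manager_approval"


def assign_owner(triggers: List[str]) -> str:
    """Pick exactly one owning role for an escalated case."""
    if not triggers:
        raise ValueError("no triggers: this case should not be escalated")
    roles = {OWNER_BY_TRIGGER.get(t, FALLBACK_OWNER) for t in triggers}
    if FALLBACK_OWNER in roles:
        # An unmapped trigger means the routing table has a gap; send it upward.
        return FALLBACK_OWNER
    for role in ROLE_PRECEDENCE:
        if role in roles:
            return role
    return FALLBACK_OWNER
```

For example, `assign_owner(["missing_required_field", "policy_conflict"])` returns `"compliance_review"` under these assumed rules, because the policy question outranks the missing field.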

Queues are part of the control structure

Queues matter here as well. A queue is not just a bucket for unresolved work. It is part of the control structure. The system should distinguish between types of exception, route them intelligently, preserve context, and make clear what the reviewer is being asked to determine.
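A rough sketch of a queue that behaves as a control structure rather than a bucket might look like the following. The `ReviewItem` fields and the `ReviewQueues` class are hypothetical names; the point is that each item carries its exception type, its context, and the specific question the reviewer is being asked.

```python
from collections import defaultdict, deque
from dataclasses import dataclass
from typing import Deque, Dict, Optional


@dataclass
class ReviewItem:
    case_id: str
    exception_type: str        # used to pick the queue
    question: str              # what the reviewer must determine
    context: Dict[str, str]    # evidence assembled by the system


class ReviewQueues:
    """One first-in, first-out queue per exception type (in-memory sketch)."""

    def __init__(self) -> None:
        self._queues: Dict[str, Deque[ReviewItem]] = defaultdict(deque)

    def enqueue(self, item: ReviewItem) -> None:
        self._queues[item.exception_type].append(item)

    def next_item(self, exception_type: str) -> Optional[ReviewItem]:
        # .get avoids creating an empty queue for a type nobody has used.
        queue = self._queues.get(exception_type)
        return queue.popleft() if queue else None

    def depth(self) -> Dict[str, int]:
        """Backlog per exception type, useful for spotting bottlenecks."""
        return {name: len(q) for name, q in self._queues.items()}
```

A reviewer pulling from the `"source_mismatch"` queue would then receive an item whose `question` reads something like "Which amount is correct?" alongside the conflicting values, instead of a bare case ID.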

Good escalation improves efficiency

Thoughtful escalation often improves efficiency rather than reducing it. When escalation is targeted, only the right cases are surfaced. Straightforward items continue. Ambiguous items receive focused attention. Reviewers spend less time rediscovering context because the system has already assembled the relevant information and framed the decision.

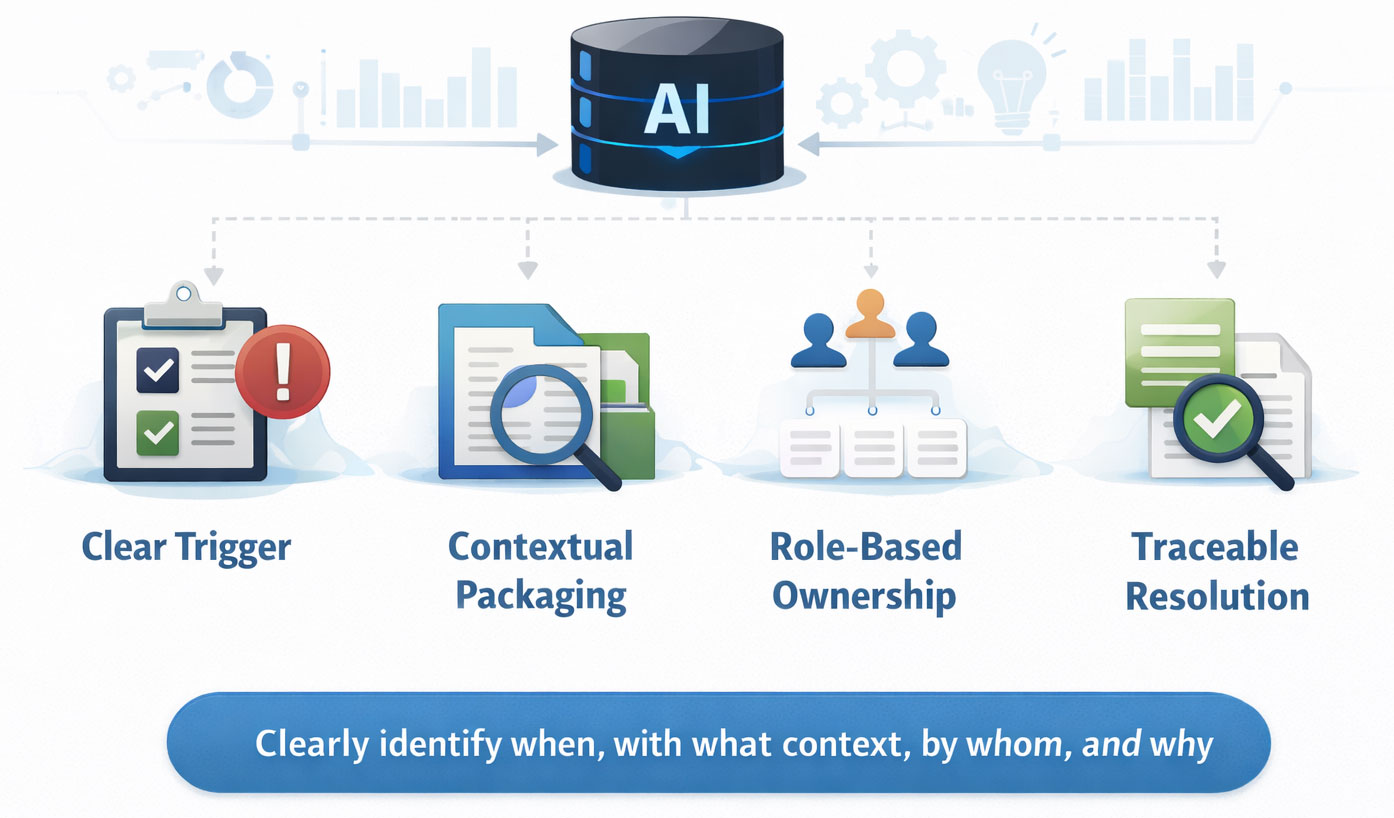

Strong escalation design has four core elements

In practice, good escalation design usually includes four elements: a clear trigger, contextual packaging, role-based ownership, and traceable resolution.

Human review must be documented, not assumed

That last part is especially important. In many organizations, human review is treated as the safe fallback, but the review itself is poorly documented. An override happens, an exception is approved, or a value is corrected, but the reason lives only in memory or in an unstructured note. Over time, that creates its own form of operational blindness.

If human intervention matters enough to protect the workflow, it matters enough to be captured.
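Capturing the intervention can be as simple as refusing to close a review without a structured record. The sketch below assumes a hypothetical `Resolution` record and a fixed set of action names; neither comes from a specific product, and the field list is only a plausible minimum.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

# Assumed vocabulary of reviewer actions.
ALLOWED_ACTIONS = {"approved", "overridden", "corrected", "rejected"}


@dataclass(frozen=True)
class Resolution:
    case_id: str
    reviewer: str
    action: str
    reason: str
    ai_recommendation: str
    final_value: str
    resolved_at: str  # ISO 8601 timestamp in UTC


def record_resolution(case_id: str, reviewer: str, action: str, reason: str,
                      ai_recommendation: str, final_value: str) -> Resolution:
    """Build a resolution record, rejecting reviews with no stated reason."""
    if action not in ALLOWED_ACTIONS:
        raise ValueError(f"unknown action: {action!r}")
    if not reason.strip():
        raise ValueError("a reason is required for every human decision")
    return Resolution(
        case_id=case_id,
        reviewer=reviewer,
        action=action,
        reason=reason.strip(),
        ai_recommendation=ai_recommendation,
        final_value=final_value,
        resolved_at=datetime.now(timezone.utc).isoformat(),
    )


def to_log_line(resolution: Resolution) -> str:
    """Serialize the record as one JSON line for an append-only audit log."""
    return json.dumps(asdict(resolution), sort_keys=True)
```

Storing the AI recommendation next to the final value makes each override visible later, without relying on anyone's memory of why it happened.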

Escalation should feed organizational learning

This is also where escalation becomes part of organizational learning. Overridden recommendations, recurring exception types, and repeated uncertainty patterns can reveal where the workflow needs adjustment, where business rules need clarification, or where the AI component needs improvement.
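Once resolutions are recorded in a structured form, recurring patterns can be counted rather than guessed at. As a small illustration, the function below computes how often reviewers overrode the AI recommendation for each exception type. The input shape, pairs of exception type and reviewer action, and the 0.3 attention threshold are assumptions made for this sketch.

```python
from collections import Counter
from typing import Dict, Iterable, List, Tuple


def override_rates(history: Iterable[Tuple[str, str]]) -> Dict[str, float]:
    """Share of reviewed cases per exception type where the action was 'overridden'."""
    totals: Counter = Counter()
    overrides: Counter = Counter()
    for exception_type, action in history:
        totals[exception_type] += 1
        if action == "overridden":
            overrides[exception_type] += 1
    return {name: overrides[name] / totals[name] for name in totals}


def needs_attention(rates: Dict[str, float], threshold: float = 0.3) -> List[str]:
    """Exception types whose override rate exceeds the (assumed) threshold."""
    return sorted(name for name, rate in rates.items() if rate > threshold)
```

A high override rate for one exception type does not say what is wrong, only where to look: the business rule may be unclear, the threshold may be off, or the model may need work on that case type.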

Respect human judgment by designing for it

The strongest AI workflows are not the ones that try to eliminate human judgment. They are the ones that respect it enough to design around it properly.

If your AI workflow affects meaningful decisions, escalation paths should be designed up front, not added after confidence in the system starts to erode.

If your organization is facing real operational complexity and needs clarity before building, the next step is a Systems Discovery conversation.

All serious engagements with SongSwift begin there.
